The Next Three Years of AI Won’t Look Like Science Fiction. They’ll Look Like Infrastructure.

A grounded forecast for 2026–2029, written at the inflection point.

As of May 2026, the dominant popular narrative about artificial intelligence remains cinematic: a singular, godlike system “wakes up,” and everything changes overnight. That narrative is wrong—not because the technology is overhyped, but because it misidentifies the shape of the transformation. The next three years of AI will not produce a clean protagonist. They will produce operational infrastructure: agents embedded inside companies, targeting pipelines inside militaries, automated cyber tools probing networks at machine speed, simulation-trained robots entering factories, AI-designed drug candidates entering human trials, synthetic media saturating information ecosystems, and intelligence layers quietly wiring themselves into everything.

The dangerous part is not one magic model. It is coordination at scale—many specialized models connected to tools, sensors, databases, robots, markets, weapons systems, and bureaucracies, each individually modest, collectively reshaping the tempo at which institutions operate and compete.

What follows are the ten most realistic cutting-edge AI developments I expect by 2029, grounded in what is already working, already funded, and already being deployed—not what makes for good keynote slides.

The 10 Most Realistic AI Developments Coming by 2029

1. AI Agents Become the New White-Collar Operating Layer

This is the most practical and most likely near-term shift. Not “chatbots” with better manners, but agents—systems that can use tools, search databases, generate reports, file tickets, write and review code, update CRMs, schedule meetings, draft legal and compliance documents, monitor workflows, and escalate only when stuck. The distinction matters: a chatbot answers questions; an agent executes processes.
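To make the chatbot/agent distinction concrete, here is a minimal Python sketch of an agent loop: it walks a plan, calls tools, retries once on failure, and escalates to a human only when it is stuck. Everything in it is an illustrative assumption rather than any vendor's API. The `Step` and `AgentResult` types, the `run_agent` function, and the `file_ticket` and `update_crm` tools are hypothetical stand-ins for real integrations.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Step:
    tool: str      # name of the (hypothetical) tool to call
    argument: str  # input handed to that tool


@dataclass
class AgentResult:
    completed: List[str] = field(default_factory=list)  # outputs of finished steps
    escalated: Optional[str] = None                      # reason for human handoff, if any


def run_agent(plan: List[Step],
              tools: Dict[str, Callable[[str], str]],
              max_retries: int = 1) -> AgentResult:
    """Execute each step in order; stop and escalate when a step cannot be done."""
    result = AgentResult()
    for step in plan:
        tool = tools.get(step.tool)
        if tool is None:
            # The agent has no way to perform this step, so it hands off.
            result.escalated = f"no tool named {step.tool!r}"
            return result
        for attempt in range(max_retries + 1):
            try:
                result.completed.append(tool(step.argument))
                break  # step succeeded, move on to the next one
            except Exception as exc:
                if attempt == max_retries:
                    # Retries exhausted: escalate instead of failing silently.
                    result.escalated = f"{step.tool} failed: {exc}"
                    return result
    return result


# Hypothetical tools standing in for real business systems.
def file_ticket(summary: str) -> str:
    return f"ticket filed: {summary}"


def update_crm(record: str) -> str:
    raise RuntimeError("CRM unavailable")


if __name__ == "__main__":
    plan = [Step("file_ticket", "renew vendor contract"),
            Step("update_crm", "acct-42")]
    outcome = run_agent(plan, {"file_ticket": file_ticket, "update_crm": update_crm})
    print(outcome.completed)
    print(outcome.escalated)
```

In this toy run the ticket step completes and the CRM step escalates after its retry. That is the "escalate only when stuck" behavior in miniature: the loop, not the human, owns the routine path.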

Microsoft’s 2025 Work Trend Index explicitly frames this as the rise of the “Frontier Firm,” a new organizational archetype in which employees manage hybrid human-agent teams and agents become embedded participants in normal business operations. McKinsey’s 2025 survey echoes the trajectory but reveals the friction: agentic AI is proliferating in pilots, yet most companies remain trapped in the pilot-to-scale transition—struggling with integration, trust, governance, and the organizational redesign that real deployment demands. That means the next three years are not about inventing agents from scratch. They are about wiring agents into boring, high-value business processes and surviving the institutional turbulence that follows.

Takeaway: By 2029, the winning companies will not be the ones “using ChatGPT.” They will be the ones that have fundamentally redesigned org charts around AI-managed workflows. Middle management, analysts, junior consultants, support operations, quality assurance, recruiting, compliance, procurement, and software maintenance sit squarely in the blast radius. The firms that delay reorganization won’t just fall behind—they’ll find themselves structurally unable to compete on cost or speed with firms that moved early.

2. Autonomous Coding Evolves from Autocomplete to Software Maintenance Factories

AI-assisted coding has already moved past autocomplete. The next phase involves agents that can inspect an entire codebase, reproduce bugs, write patches, execute test suites, open pull requests, document changes, and monitor production regressions autonomously. DARPA’s AI Cyber Challenge demonstrated that autonomous cyber reasoning systems can find and patch real vulnerabilities in open-source software tied to critical infrastructure—not in theory, but in competitive, adversarial conditions.

The realistic trajectory by 2029 is not “AI replaces all software engineers.” It is one senior engineer supervising a swarm of coding agents that execute migrations, generate tests, upgrade dependencies, fix vulnerabilities, and build internal tooling. The economics are straightforward: if an agent can handle 60–80% of routine maintenance work at a fraction of the cost, the financial pressure to adopt becomes irresistible.

Takeaway: Software engineering is becoming more like architecture—review, systems thinking, judgment, and taste. The practitioners who only translate tickets into code are in the blast radius. The ones who can design systems, evaluate tradeoffs, and orchestrate machine-speed development pipelines will be more valuable than ever. The profession doesn’t die; it stratifies violently.

3. Cybersecurity Becomes AI-versus-AI, and Offense May Temporarily Outrun Defense

This is one of the most consequential and least glamorous near-term arenas. AI will drastically lower the skill threshold for phishing, reconnaissance, exploit chaining, vulnerability discovery, malware polymorphism, and social engineering. The Center for Strategic and International Studies recently argued that the near-term reality is less a “fully autonomous cyberattack robot” than an accelerant: AI speeds up and democratizes existing attack methods, making mediocre attackers significantly more effective and sophisticated attackers faster.

Simultaneously, defenders will deploy AI for log analysis, automated patching, incident triage, threat hunting, and proactive vulnerability discovery. CISA, NSA, the FBI, and allied agencies have already issued joint AI security guidance, and DARPA’s AI Cyber Challenge is explicitly building the foundation for automated vulnerability detection and remediation at scale.

But there is an asymmetry problem. Offense is inherently easier to scale: one exploit can be reused millions of times, while defense must be correct everywhere, all the time. AI amplifies this asymmetry before it corrects it.
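A back-of-the-envelope sketch shows why "correct everywhere" is such a demanding standard. It assumes, purely for illustration, that each system independently withstands a given attack with the same probability. Real networks are correlated and uneven, so the function name `breach_probability` and the numbers below are hypothetical, not measurements.

```python
def breach_probability(hold_rate: float, systems: int) -> float:
    """Chance that at least one of `systems` targets is breached.

    Assumption (illustrative only): every system holds independently
    with the same probability `hold_rate`.
    """
    if not 0.0 <= hold_rate <= 1.0:
        raise ValueError("hold_rate must be between 0 and 1")
    if systems < 0:
        raise ValueError("systems must be non-negative")
    # All systems hold with probability hold_rate ** systems;
    # the attacker wins if that does not happen.
    return 1.0 - hold_rate ** systems


if __name__ == "__main__":
    for n in (1, 100, 1_000):
        print(n, round(breach_probability(0.999, n), 3))
```

Under these assumptions, a defense that holds 99.9% of the time per system still leaves roughly a 63% chance of at least one breach across 1,000 systems. The attacker needs one success; the defender needs a thousand.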

Takeaway: Between now and 2029, the internet becomes meaningfully more hostile. The biggest winners in this environment will be governments, hyperscalers, major banks, and defense contractors that can afford AI-native security operations centers. The weak underbelly—small businesses, municipalities, hospitals, schools, and local governments—will face a threat environment that outstrips their capacity to respond. Expect a wave of catastrophic breaches in exactly the institutions least equipped to absorb them.

4. Military AI Becomes Operational, Not Experimental

This is where “money is no object” exerts its most direct influence. The Pentagon has crossed the threshold from exploratory AI pilots to classified integration with major technology companies. Recent reporting confirms that the Department of Defense maintains agreements with Google, Microsoft, AWS, Nvidia, OpenAI, Reflection AI, and SpaceX to embed AI tools into classified military systems—spanning decision support, target identification, predictive maintenance, logistics optimization, and battlefield analysis.

RAND’s recent work frames the future of military AI around four structural competitions: quantity versus quality, hiding versus finding, centralized versus decentralized command, and cyber offense versus cyber defense. This is the right analytical lens. AI does not simply make a missile “smarter.” It transforms the tempo of war itself: detection, deception, targeting, logistics, electronic warfare, and command decisions compress into shorter and shorter cycles. The military that operates inside a faster decision loop possesses a potentially decisive advantage—a dynamic theorists have understood since John Boyd, but that AI makes operationally real.

Takeaway: By 2029, the most consequential military AI will not resemble a humanoid Terminator. It will be sensor fusion plus autonomous drones plus targeting recommendation engines plus cyber operations plus logistics optimization, all operating within integrated kill chains. Human approval may remain formally required, but the machine will increasingly define the menu of choices—and the menu shapes the decision far more than the final click.

5. Drone Swarms and Cheap Autonomous Systems Become Strategically Decisive

The cutting edge of military AI is not only exquisite billion-dollar platforms. It is also cheap autonomy: expendable drones, loitering munitions, maritime autonomous systems, persistent surveillance platforms, and disposable robotics coordinated by AI at speeds human operators cannot match.

The logic is brutal and elegant: AI makes quantity more useful. A military that can deploy thousands of autonomous systems, process terabytes of sensor data in real time, and overwhelm slower human-controlled decision loops gains an advantage that superior individual platforms cannot offset. RAND’s “quantity versus quality” framing captures this structural shift precisely. The industrial era rewarded mass production of standardized weapons. The AI era rewards mass coordination of adaptive systems.

Takeaway: By 2029, every serious military on Earth will be racing toward AI-assisted drone swarms, counter-swarm defense systems, autonomous logistics, and battlefield perception layers. This is not speculative futurism. Ukraine has already fundamentally altered the world’s understanding of what cheap drones can accomplish against conventional forces. AI is the next multiplier—and every general staff on the planet is paying attention.

6. Humanoid and General-Purpose Robots Enter Constrained Real Workplaces

Humanoid robots remain overhyped in the popular press, but they are no longer pure theater. Figure AI reported that its Figure 02 robots completed 10-hour shifts at a BMW manufacturing facility, loaded more than 90,000 parts, accumulated over 1,250 hours of operational runtime, and contributed to the production of more than 30,000 BMW X3 vehicles. These are not demo-day stunts. They are early production metrics.

That does not mean a domestic robot butler is arriving in 2028. It means factories, warehouses, laboratories, hospitals, and military logistics sites will begin deploying embodied AI in narrow but economically meaningful roles—roles defined not by versatility, but by the willingness of the environment to accommodate the robot’s limitations.

Takeaway: By 2029, the realistic breakthrough is not a general-purpose humanoid that navigates your kitchen. It is robots that can perform a rotating set of dull, repetitive, semi-structured physical tasks in environments specifically designed or adapted for them. Manufacturing lines, warehouse fulfillment centers, elder-care logistics, commercial cleaning, infrastructure inspection, and defense logistics are the beachheads. The lesson of every prior wave of automation applies: the environment adapts to the machine before the machine fully adapts to the environment.

7. World Models and Simulation Become the Bridge from Digital AI to Physical AI

World models may be the single most important “quiet” breakthrough of this period. They allow AI systems to simulate environments, predict consequences of actions, and train agents across millions of synthetic scenarios before any deployment in the physical world. Google DeepMind describes its Genie 3 system as a world model capable of generating interactive environments and training agents through rich simulated curricula. Nature recently highlighted world models as one of AI’s most significant new frontiers for robotics and real-world capability.

The reason this matters is economic and practical: real-world data is expensive, slow, and dangerous to collect. You cannot crash ten thousand robots into factory equipment to teach them spatial awareness. You cannot expose surgical robots to every possible complication in live patients. Simulation lets agents practice at scale, fail cheaply, and arrive at deployment with a foundation of synthetic experience that would take decades to accumulate in physical reality.

Takeaway: The public will continue to focus on chatbots and image generators. The serious laboratories will increasingly focus on simulation, reinforcement learning, robotics, and tool-using agents trained in synthetic environments. World models are the connective tissue between language-model intelligence and physical-world competence. Whoever masters the full pipeline, from simulation through sim-to-real transfer to deployment, controls the next decade of embodied AI.

8. AI-Designed Drugs and Biotech Enter the Clinical Proof Era

AI has already transformed structural biology; AlphaFold’s protein-structure predictions are now standard infrastructure in laboratories worldwide. The next step is drug design and biological experimentation. Isomorphic Labs, the DeepMind spinoff, is preparing human clinical trials for AI-designed drug candidates, including oncology programs that moved from computational prediction to preclinical validation at unprecedented speed.

The realistic three-year trajectory is not “AI cures cancer.” It is AI compressing early-stage discovery: target identification, molecule generation, protein-ligand interaction modeling, toxicity prediction, clinical trial design optimization, and laboratory automation. Each step shaved from a process that historically takes 10–15 years and costs $1–2 billion per approved drug represents enormous value—even if the downstream clinical bottlenecks remain stubbornly human-paced.

Takeaway: By 2029, AI will be taken seriously as a core component of the drug discovery engine, but clinical validation will remain the binding constraint. Biology is not software; molecules that look perfect in silico fail in living systems for reasons we still barely understand. The real acceleration comes when AI-driven design is paired with robotic wet labs and faster experimental feedback loops—closing the gap between prediction and physical reality. That convergence is beginning now.

9. Compute Infrastructure Becomes Geopolitical Infrastructure

AI progress over the next three years depends heavily on a physical substrate that is easy to forget in conversations about algorithms: chips, energy, datacenters, networking, memory bandwidth, cooling systems, and inference cost curves. Nvidia’s Rubin platform, announced for 2026, promises major reductions in inference token cost and fewer GPUs required to train large mixture-of-experts models compared with its Blackwell predecessor.

This matters because cheaper inference changes what is economically possible. When the cost per token falls by an order of magnitude, companies can run more agents, sustain longer context windows, deploy continuous monitoring systems, process real-time multimodal data streams, and embed AI inside more products. Inference cost is the tax on every AI application; reducing it unlocks categories of use that were previously uneconomical.
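The arithmetic behind that claim is simple enough to sketch. The function below is a hypothetical illustration, and the budget, token prices, and per-agent token usage in the example are invented round numbers, not vendor pricing or figures from any of the sources cited here.

```python
def agents_affordable(daily_budget_usd: float,
                      usd_per_million_tokens: float,
                      tokens_per_agent_per_day: int) -> int:
    """How many always-on agents a fixed daily budget can sustain.

    All inputs are hypothetical planning numbers.
    """
    if usd_per_million_tokens <= 0 or tokens_per_agent_per_day <= 0:
        raise ValueError("price and token usage must be positive")
    # Daily cost of running a single agent at this token price.
    cost_per_agent = usd_per_million_tokens * tokens_per_agent_per_day / 1_000_000
    # Whole agents only: round down.
    return int(daily_budget_usd // cost_per_agent)


if __name__ == "__main__":
    budget = 1_000.0          # hypothetical daily spend in USD
    usage = 5_000_000         # hypothetical tokens per agent per day
    print(agents_affordable(budget, 10.0, usage))  # before the price drop
    print(agents_affordable(budget, 1.0, usage))   # after a 10x price drop
```

With these made-up inputs, a tenfold drop in token price takes the same budget from 20 agents to 200. The point is not the specific numbers but the shape: the fleet size scales inversely with inference cost.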

Takeaway: AI is becoming an energy-and-industrial-base race. Datacenters are the new strategic factories. The countries and companies that control advanced chip fabrication, sufficient power generation, efficient cooling infrastructure, high-bandwidth fiber networks, and top-tier model talent control the AI deployment layer—and, by extension, an increasing share of economic and military capability. The semiconductor export controls, the datacenter buildout wars, the nuclear-power-for-AI deals—these are not sideshows. They are the main event.

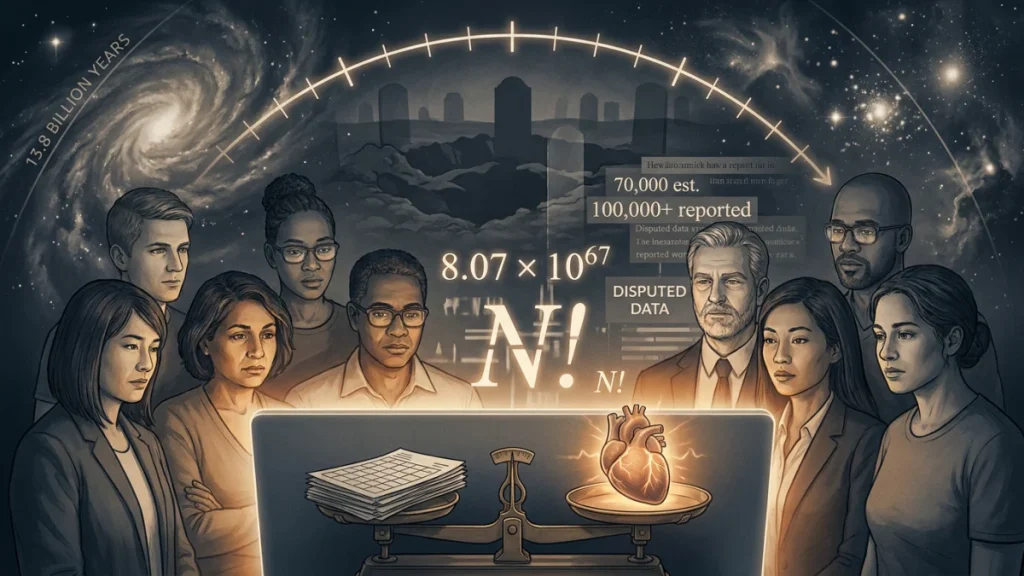

10. Synthetic Media, Personalized Manipulation, and Epistemic Collapse Become Normal

This is the social dystopia lane, and it is already well underway. Generative AI makes it cheap and trivial to produce endless text, photorealistic images, convincing video, voice clones, fake local news outlets, synthetic influencer personas, bot-driven social media campaigns, targeted propaganda, legitimate-looking legal documents, and emotionally optimized persuasion at industrial scale.

The International AI Safety Report identifies risks spanning malicious use, disinformation, cyberattacks, labor market disruption, and broader systemic harms to democratic institutions. NIST’s generative AI risk profile emphasizes challenges around hallucination, content provenance, security vulnerabilities, and systematic misuse potential.

But the deepest risk is not any individual piece of synthetic content. It is the cumulative epistemic effect.

Takeaway: By 2029, the core problem will not be “Can AI produce convincing fake videos?” Everyone will know it can. The real crisis will be epistemic exhaustion—the point at which ordinary people stop trying to determine what is real, not because they are foolish, but because the cost of verification exceeds the cognitive budget of daily life. That exhaustion benefits authoritarians, scammers, propagandists, monopolists, and anyone with enough resources to flood the information zone. Trust becomes the scarcest commodity in the economy—and the most exploitable.

The Blunt Ranking: Likelihood and Impact by 2029

| Rank | Development | Likelihood by 2029 | Impact |
|---|---|---|---|
| 1 | AI agents in enterprise workflows | Very High | Very High |
| 2 | AI coding and software maintenance factories | Very High | Very High |
| 3 | AI cyber offense/defense escalation | Very High | Very High |
| 4 | Military AI decision support and targeting pipelines | High | Extreme |
| 5 | Drone swarms and autonomous battlefield systems | High | Extreme |
| 6 | Synthetic media, surveillance, and epistemic manipulation | Very High | High |
| 7 | World models and simulation-to-reality pipelines | High | Very High |
| 8 | Humanoid and general-purpose robots in constrained roles | Medium-High | High |
| 9 | AI-designed drugs entering clinical validation | Medium-High | High |
| 10 | Compute infrastructure as geopolitical leverage | Very High | Extreme |

The Uncomfortable Throughline

Read across these ten developments and a single pattern emerges: AI is not arriving as a product. It is arriving as an operating layer. It will sit beneath enterprise workflows, inside military kill chains, behind cybersecurity perimeters, within drug discovery pipelines, underneath robotic control systems, and throughout the information environment. Most people will not interact with “AI” as a distinct, visible thing. They will interact with systems that are faster, cheaper, more persuasive, more relentless, and more opaque than what came before—often without fully understanding why.

The winners in this landscape will not be those who build the single best model. They will be the institutions—companies, militaries, governments, research labs—that learn to orchestrate many models, connected to real-world tools, operating at machine speed, governed by humans who still understand what the systems are doing and why.

The losers will be those who wait for the movie version—the clean, singular moment when “AGI arrives” and the future clarifies. That moment is not coming. The future is arriving unevenly, operationally, and in pieces, and by the time it is legible to everyone, the structural advantages will already be locked in.

The next three years are not a spectacle. They are a reorganization. Act accordingly.

Further Reading

- Microsoft Work Trend Index 2025: “The Year the Frontier Firm Is Born”

  Useful for understanding AI agents in workplaces, “human-agent teams,” and how companies may reorganize around AI.

- DARPA: AI Cyber Challenge Marks Pivotal Inflection Point for Cyber Defense

  Important for autonomous cyber defense, vulnerability discovery, and automated patching.

- CSIS: Beyond Autonomous Attacks: The Reality of AI-Enabled Cyber Threats

  Strong sober analysis of how AI is changing cyber threats without relying on sci-fi assumptions.

- CISA: Best Practices Guide for Securing AI Data

  Useful government guidance on securing data used to train and operate AI systems.

- Associated Press: U.S. Military Reaches Deals With 7 Tech Companies to Use Their AI on Classified Systems

  Key source for the military-AI operationalization angle.

- RAND: How Could Artificial Intelligence Shape the Future of War?

  One of the better frameworks for understanding AI’s military impact: quantity vs. quality, hiding vs. finding, centralized vs. decentralized command, and cyber offense vs. defense.

- Figure AI: Figure 02 Contributed to the Production of 30,000 Cars at BMW

  Useful source for practical robotics deployment in constrained industrial environments.

- Google DeepMind: Genie 3: A New Frontier for World Models

  Important for understanding world models, simulation, embodied AI, and training agents in interactive environments.

- International AI Safety Report 2025

  Broad, serious source on advanced AI capabilities, risks, malicious use, safety, and systemic impacts.

- NIST: Artificial Intelligence Risk Management Framework: Generative AI Profile

  Useful for a governance/risk section, especially around hallucination, provenance, misuse, and security.

- NVIDIA: Rubin Platform Announcement

  Useful for the compute/infrastructure angle: inference costs, chips, datacenters, and AI industrial capacity.